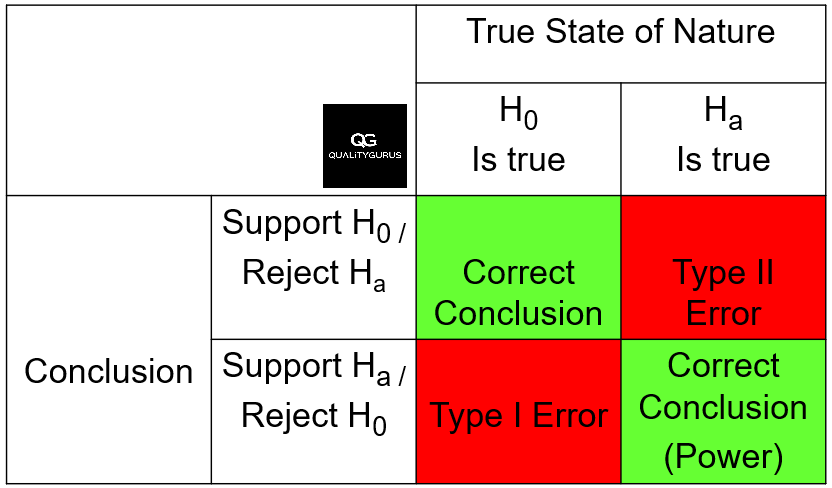

In hypothesis testing, two types of errors can occur: type I and type II. A type I error is the incorrect rejection of a true null hypothesis, and a type II error is the incorrect failure to reject a false one.

Type I Error (Alpha)

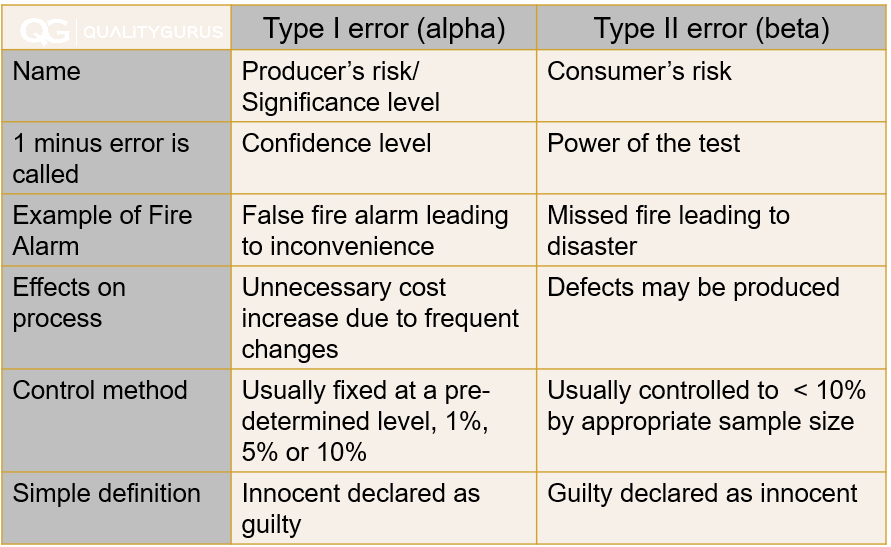

A type I error occurs when the null hypothesis is true but is rejected in favour of the alternative hypothesis. This error is also known as a false positive, an alpha error or the producer's risk. Its probability, denoted alpha (α), is the probability of rejecting the null hypothesis when it is true, and it is controlled by the significance level of the test.

For example, if the null hypothesis is that the population mean is equal to 10, and the alternative hypothesis is that the population mean is not equal to 10, a type I error would occur if the test rejects the null hypothesis when the population mean is actually equal to 10.

The probability of making a type I error is chosen before the test is run, and is typically set at 0.05 or 0.01. This means that, when the null hypothesis is true, the test has a 5% or 1% probability of rejecting it.
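The link between the significance level and the type I error rate can be checked with a small simulation. The sketch below is illustrative only: it assumes a two-sided z-test with a known standard deviation, and the names `z_test_rejects` and `simulate_type_i_rate`, along with the values `sigma=2.0` and `n=30`, are assumptions made for the example rather than anything prescribed by the text.

```python
import random
from statistics import NormalDist


def z_test_rejects(sample, mu0, sigma, alpha):
    """Two-sided z-test with known sigma: True if H0 (mean == mu0) is rejected."""
    n = len(sample)
    sample_mean = sum(sample) / n
    z = (sample_mean - mu0) / (sigma / n ** 0.5)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return abs(z) > z_crit


def simulate_type_i_rate(mu0=10.0, sigma=2.0, n=30, alpha=0.05,
                         trials=20_000, seed=0):
    """Fraction of tests that reject H0 when H0 is actually true.

    sigma, n, trials and seed are assumed values chosen for illustration.
    """
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        # Data are drawn with the true mean equal to mu0, so H0 holds
        # and every rejection is a type I error.
        sample = [rng.gauss(mu0, sigma) for _ in range(n)]
        if z_test_rejects(sample, mu0, sigma, alpha):
            rejections += 1
    return rejections / trials


if __name__ == "__main__":
    print(f"Observed type I error rate: {simulate_type_i_rate():.3f}")
```

Under these assumptions the observed rejection rate should land close to the chosen alpha of 0.05, and rerunning with `alpha=0.01` should bring it down to roughly 1%.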

(1 - Type I Error) is the Confidence Level. The confidence level measures how reliably a statistical test avoids false positives. For example, a confidence level of 95% means that, when the null hypothesis is true, there is a 95% chance that the test will correctly not reject it and a 5% chance that it will be rejected. In other words, the confidence level is the probability that the test reaches the correct conclusion, given that the null hypothesis is true. A higher confidence level demands stronger evidence before the null hypothesis is rejected, so a rejection is more trustworthy, while a lower level makes false positives more likely.

Type II Error (Beta)

A type II error occurs when the null hypothesis is false but is not rejected in favour of the alternative hypothesis. This error is also known as a false negative, a beta error or the consumer's risk. Its probability, denoted beta (β), is the probability of not rejecting the null hypothesis when it is false, and it is the complement of the power of the test.

For example, if the null hypothesis is that the population mean is equal to 10, and the alternative hypothesis is that the population mean is not equal to 10, a type II error would occur if the test fails to reject the null hypothesis when the population mean is actually not equal to 10.

The probability of making a type II error is the complement of the power of the test. Power is the probability of correctly rejecting the null hypothesis when it is false, so the type II error rate equals 1 minus the power. The power of a test can be calculated from the sample size, the significance level, the magnitude of the effect being studied and the variability of the data.

In summary, a type I error is the incorrect rejection of a true null hypothesis, and a type II error is the failure to reject a false null hypothesis.

(1 - Type II Error) is the power of the test. The power of the test is the probability of correctly rejecting the null hypothesis when it is false. For example, a test with 80% power has an 80% chance of correctly rejecting the null hypothesis when it is false, and therefore a 20% chance of a type II error. Higher power means the test is more sensitive to a true effect or relationship, and lower power means it is less sensitive.
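As a rough illustration of how power follows from the sample size, the significance level and the size of the effect, the sketch below computes the power of a two-sided z-test analytically. It assumes a known standard deviation, which is a simplification; the function name `z_test_power` and the defaults (`mu0=10.0`, `sigma=2.0`, `n=30`) are hypothetical values picked to match the running example.

```python
from statistics import NormalDist


def z_test_power(true_mean, mu0=10.0, sigma=2.0, n=30, alpha=0.05):
    """Power of a two-sided z-test of H0: mean == mu0, with known sigma.

    sigma and n are assumed values chosen for illustration.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Under the true mean, the test statistic is normal with this mean
    # and unit variance.
    shift = (true_mean - mu0) / (sigma / n ** 0.5)
    upper_tail = 1 - std_normal.cdf(z_crit - shift)   # P(Z > z_crit)
    lower_tail = std_normal.cdf(-z_crit - shift)      # P(Z < -z_crit)
    return upper_tail + lower_tail


if __name__ == "__main__":
    for true_mean in (10.0, 10.5, 11.0, 12.0):
        power = z_test_power(true_mean)
        print(f"true mean = {true_mean:4.1f}  power = {power:.3f}  "
              f"beta = {1 - power:.3f}")
```

When the true mean equals 10 the null hypothesis is in fact true, so the computed "power" collapses to alpha. As the true mean moves away from 10, the power rises and the type II error rate falls.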

Balancing Type I and Type II Errors

Generally, it is important to consider the risks of both type I and type II errors when designing and conducting hypothesis tests. A low probability of making a type I error is desirable, as the test then applies a strict significance level and is less likely to reject the null hypothesis when it is true.

However, a low probability of making a type II error is also essential, as the test has high power and is more likely to reject the null hypothesis when it is false.

Decreasing the type I error rate (also known as the "significance level" or "alpha") means that there is less chance that the null hypothesis will be rejected when it is true. But as a result, with the sample size and effect size held fixed, the type II error rate (also known as "beta") will increase, because the stricter threshold also raises the probability of not rejecting the null hypothesis when it is false.
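The trade-off can be made concrete by computing beta for several values of alpha while everything else stays fixed. This is a sketch under stated assumptions: a two-sided z-test with known standard deviation, a null mean of 10, and a hypothetical true mean of 11 with `sigma=2.0` and `n=30`. The function name `type_ii_error` is likewise illustrative.

```python
from statistics import NormalDist


def type_ii_error(alpha, true_mean=11.0, mu0=10.0, sigma=2.0, n=30):
    """Beta for a two-sided z-test of H0: mean == mu0, with known sigma.

    true_mean, sigma and n are assumed values chosen for illustration.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = (true_mean - mu0) / (sigma / n ** 0.5)
    # Beta is the probability that the test statistic stays inside
    # the non-rejection region [-z_crit, z_crit] although H0 is false.
    return std_normal.cdf(z_crit - shift) - std_normal.cdf(-z_crit - shift)


if __name__ == "__main__":
    for alpha in (0.10, 0.05, 0.01):
        print(f"alpha = {alpha:.2f} -> beta = {type_ii_error(alpha):.3f}")
```

Each tightening of alpha widens the non-rejection region, so the printed beta values grow as alpha shrinks.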

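One option to reduce both type I and type II errors is to increase the sample size. With a larger sample size, there is more statistical power to detect an effect or relationship between variables. For a fixed significance level this lowers the type II error rate, and the extra power leaves room to choose a stricter significance level as well, so both error rates can be reduced together.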
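One way to see the effect of sample size is to search for the smallest sample that reaches a target power at a given alpha. The sketch below again assumes a two-sided z-test with known standard deviation. The helper names `power_at` and `smallest_n_for_power`, the hypothetical true mean of 11 and `sigma=2.0` are all assumptions made for the example.

```python
from statistics import NormalDist


def power_at(n, alpha=0.05, true_mean=11.0, mu0=10.0, sigma=2.0):
    """Power of a two-sided z-test with known sigma at sample size n."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = (true_mean - mu0) / (sigma / n ** 0.5)
    return (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)


def smallest_n_for_power(target=0.80, alpha=0.05, true_mean=11.0,
                         mu0=10.0, sigma=2.0, max_n=100_000):
    """Smallest n whose power reaches the target (simple linear search).

    true_mean and sigma are assumed values chosen for illustration.
    """
    for n in range(2, max_n + 1):
        if power_at(n, alpha, true_mean, mu0, sigma) >= target:
            return n
    raise ValueError("target power not reached within max_n observations")


if __name__ == "__main__":
    for alpha in (0.05, 0.01):
        n = smallest_n_for_power(alpha=alpha)
        print(f"alpha = {alpha:.2f}: n = {n} gives power {power_at(n, alpha):.3f}")
```

Comparing the two rows shows the cost of a stricter alpha: keeping beta at 20% while lowering alpha from 0.05 to 0.01 requires more observations.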

Balancing the risks of type I and II errors is essential in designing and interpreting hypothesis tests.

Type I and II Errors in the Context of a Court of Law

In a court of law, the null hypothesis is that the defendant is innocent. A type I error is a false positive, meaning an innocent person is declared guilty. A type II error is a false negative, meaning a guilty person is declared innocent.

Type I and II Errors in the Context of a Fire Alarm

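A fire alarm is a good example of how type I and type II errors can occur. Here the null hypothesis is that there is no fire. A type I error would be a false alarm, where the alarm sounds although there is no fire, leading to inconvenience and unnecessary costs. A type II error would be a missed fire, where the alarm stays silent during a real fire, leading to disaster.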